Alexa Skills: which skill types are available to developers?

Alexa Skills: developers can choose between five different types of skills, each intended for a different purpose. This article presents them.

In the following, we will get to know the individual types and learn something about their structure. This article is an excerpt from my book “Developing Alexa Skills”, which is available on Amazon from €8.99. It’s worth it for developers and those who want to become developers.


To start with Alexa as a developer, you first have to understand the different ways you can approach the Alexa Skills Kit (ASK), which is described earlier in the book. In ASK, the skill types mentioned above all work somewhat differently. Flash Briefing Skills and Smart Home Skills, for example, are built on predefined APIs. These APIs give you less control over the skill, but they also simplify development, since Amazon has already done a lot of the preliminary work. In general, all skills are hosted either on your own web server or in AWS Lambda. Since the beginning of 2019, Amazon has also offered Alexa-hosted skills, where Amazon hosts the skill for you as long as it stays within the free limits in AWS. In that case, the Lambda function can also be found in the Alexa developer console.

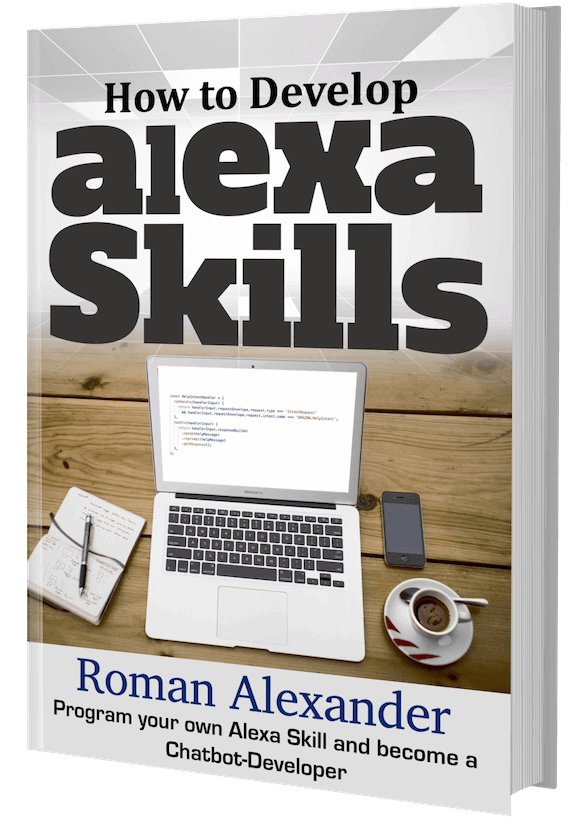

Flash Briefing Skills

Flash Briefing Skills use either an RSS or a JSON feed that contains the new items read out as part of the Flash Briefing. Such skills are easy to develop and are a good fit for newspapers and bloggers. The skill service in this case is not a Lambda function, but the RSS or JSON feed itself.
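To make the idea of a feed as the skill service more concrete, here is a minimal sketch of how one JSON feed item could be generated. The field names (`uid`, `updateDate`, `titleText`, `mainText`, `redirectionUrl`) are modeled on Amazon's Flash Briefing feed format and should be checked against the current feed reference. The helper `make_feed_item` and all example values are hypothetical.

```python
import json
import uuid
from datetime import datetime, timezone


def make_feed_item(title, text, url, published=None):
    """Build one feed item as a plain dict (hypothetical helper).

    `published` is expected to be a timezone-aware datetime; it defaults to now.
    """
    published = (published or datetime.now(timezone.utc)).astimezone(timezone.utc)
    return {
        "uid": f"urn:uuid:{uuid.uuid4()}",  # unique ID per item
        "updateDate": published.strftime("%Y-%m-%dT%H:%M:%S.0Z"),
        "titleText": title,
        "mainText": text,  # the text Alexa reads aloud
        "redirectionUrl": url,  # link to the full article
    }


if __name__ == "__main__":
    item = make_feed_item(
        "Example headline",
        "This is the text that would be read aloud.",
        "https://example.com/news/1",
    )
    # The feed served to Alexa would be this JSON document.
    print(json.dumps([item], indent=2))
```

A newspaper or blog would typically serve this JSON from a fixed HTTPS URL and regenerate it whenever new articles appear.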

Illustration: Schematic representation of a Flash Briefing Skill

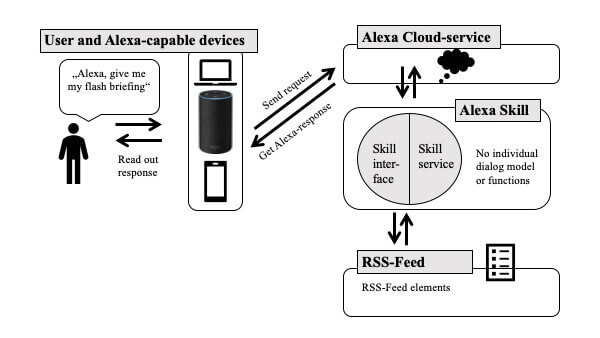

Smart Home Skills

These skills use a predefined interface (the Smart Home Skill API) and require an AWS Lambda function that acts as an adapter for integrating the device. In addition, such skills require account linking, which allows the end user to link their Amazon Alexa account to their account with the smart home device vendor for authenticated control of smart home devices.
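The adapter role of the Lambda function can be sketched roughly as follows. The message shape is modeled on the discovery exchange of version 3 of the Smart Home Skill API and should be verified against the current reference. The device list `DEVICES`, the manufacturer name, and the single capability shown are assumptions made only for this example.

```python
import uuid

# Hypothetical device list. A real adapter would query the vendor's device
# cloud with the access token obtained through account linking.
DEVICES = [{"id": "lamp-01", "name": "Living room lamp"}]


def lambda_handler(event, context):
    """Answer a discovery directive with the devices this skill can control."""
    header = event["directive"]["header"]
    if header["namespace"] == "Alexa.Discovery" and header["name"] == "Discover":
        endpoints = [
            {
                "endpointId": device["id"],
                "friendlyName": device["name"],
                "manufacturerName": "Example Corp",  # assumption
                "description": "Demo lamp",
                "displayCategories": ["LIGHT"],
                "capabilities": [
                    {
                        "type": "AlexaInterface",
                        "interface": "Alexa.PowerController",
                        "version": "3",
                        "properties": {"supported": [{"name": "powerState"}]},
                    }
                ],
            }
            for device in DEVICES
        ]
        return {
            "event": {
                "header": {
                    "namespace": "Alexa.Discovery",
                    "name": "Discover.Response",
                    "payloadVersion": "3",
                    "messageId": str(uuid.uuid4()),
                },
                "payload": {"endpoints": endpoints},
            }
        }
    # Other directives (for example turning a device on) are not handled here.
    raise ValueError(f"Unsupported directive: {header['namespace']}.{header['name']}")
```

The sketch only covers discovery; controlling a device would need further branches that translate Alexa directives into calls to the vendor's own API.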

Illustration: Schematic representation of a Smart Home Skill

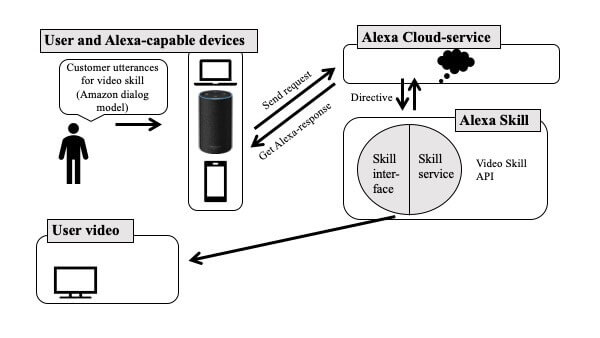

Video Skills

Video Skills are designed to let users find and watch videos (and movies) without having to invoke a particular skill. For example, a customer might say “Alexa, play Batman Returns” without specifying a provider or device. Through the Video Skill API, Alexa is informed about the video devices and services that a user owns or subscribes to, so that it can play the video.

The Video Skill API intelligently guides users to the content they want. In contrast, custom skills require the user to invoke a skill by name, and the results of a custom skill search cannot include the user’s other services or devices.

A video provider can use the Video Skill API to create an Alexa skill that includes all its videos in Alexa’s content catalog. Alexa then knows the content provider. When the user says, “Alexa, play Batman Returns,” Alexa identifies the provider and loads the video. Without the Video Skill API, the user would need to know which provider offers the movie (if they use multiple providers such as Amazon and Netflix), have the corresponding Custom Skill enabled, and then ask that skill for the content: “Alexa, ask Netflix to play Batman Returns”.

Illustration: Schematic representation of a Video Skill

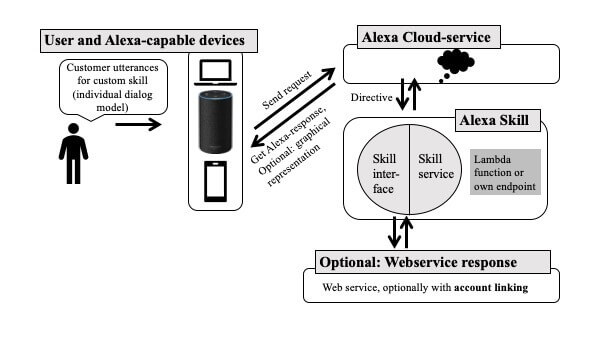

Custom Skills

Custom Skills are the most flexible, but also the most complex variant, since developers have to provide the interaction model themselves. The interaction model is essentially the “conversation”, the dialogue between Alexa and the user. It represents the different ways in which users ask their questions, how Alexa collects the information, and how Alexa answers the query.

Custom Skills are hosted either in AWS Lambda, as an Alexa-hosted skill at Amazon, or on a self-managed HTTPS-enabled web server. A self-managed server, however, must present an SSL certificate that Amazon accepts.
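For the AWS Lambda variant, the skill service can be sketched as a plain handler function that inspects the incoming request and returns a JSON response. This is a minimal sketch without the SDK; the intent name `TrainStatusIntent` and the spoken texts are hypothetical, and a production skill would usually rely on one of the official SDKs instead.

```python
def build_response(text, end_session=True):
    """Wrap plain text in the response envelope a custom skill returns."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def lambda_handler(event, context):
    """Entry point that AWS Lambda calls with the request sent by Alexa."""
    request = event["request"]
    if request["type"] == "LaunchRequest":
        # The user opened the skill without asking for anything specific.
        return build_response("Welcome. Ask me for the train status.", end_session=False)
    if request["type"] == "IntentRequest":
        # "TrainStatusIntent" is a made-up intent for this sketch.
        if request["intent"]["name"] == "TrainStatusIntent":
            return build_response("All trains are running on time.")
    return build_response("Sorry, I did not understand that.")
```

Keeping the session open after the launch request lets the user continue the dialogue without repeating the skill name.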

Custom skills also support custom slot types (slot types are basically data types), so developers can go beyond the types built in by Amazon. Such skills can be used, for example, to tell users the status of the BART transit system in San Francisco. Custom skills must be enabled separately by the user, and the skill’s invocation name must be mentioned in each request.

Illustration: Schematic representation of a Custom Skill
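An interaction model with a custom slot type could look roughly like the following, written here as a Python dictionary that serializes to the JSON used in the developer console. The invocation name, the intent `TrainStatusIntent`, the slot type `TRANSIT_LINE`, and its values are invented for this example. The helper `undefined_slot_types` is likewise hypothetical and only checks that every custom type in use has a definition.

```python
import json

interaction_model = {
    "interactionModel": {
        "languageModel": {
            "invocationName": "train status",  # what the user says to reach the skill
            "intents": [
                {
                    "name": "TrainStatusIntent",
                    "slots": [{"name": "line", "type": "TRANSIT_LINE"}],
                    "samples": [
                        "what is the status of the {line} line",
                        "is the {line} line delayed",
                    ],
                },
                {"name": "AMAZON.HelpIntent", "samples": []},
                {"name": "AMAZON.StopIntent", "samples": []},
            ],
            # Custom slot type: a data type with its own list of values.
            "types": [
                {
                    "name": "TRANSIT_LINE",
                    "values": [
                        {"name": {"value": "red"}},
                        {"name": {"value": "blue"}},
                    ],
                }
            ],
        }
    }
}


def undefined_slot_types(model):
    """Return custom slot types that intents use but the model does not define."""
    language_model = model["interactionModel"]["languageModel"]
    defined = {t["name"] for t in language_model.get("types", [])}
    used = {
        slot["type"]
        for intent in language_model["intents"]
        for slot in intent.get("slots", [])
        if not slot["type"].startswith("AMAZON.")  # built-in types need no definition
    }
    return used - defined


if __name__ == "__main__":
    print(json.dumps(interaction_model, indent=2))
    print("Undefined slot types:", undefined_slot_types(interaction_model))
```

The sample utterances show how a slot appears in curly braces; Alexa fills it with one of the values of the custom type.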

Baby Activity Skills

Baby Activity Skills were introduced at the end of 2018. These skills use the Alexa.Health API, which allows users to manage health data for themselves, their children and other family members by talking to Alexa. When using this API, the voice interaction model is already predefined. As of early 2019, however, the Health API is only available to US users. Some example utterances:

- Alexa, record sleep for three hours starting at two pm.

- Alexa, how long did my baby sleep yesterday?

- Alexa, log a formula feeding of three ounces at two pm.

- Alexa, how much has my baby eaten today?

- Alexa, record a dirty diaper at three pm.

The Alexa.Health API requires customer data and customer identification via account linking with another app or website.

The Architecture of a Custom Skill in the AWS Context

Alexa skills are very much integrated into the AWS architecture, so here is a diagram to show these services:

Which programming languages are used?

For AWS Lambda you can write functions in Node.js, Java or Python, while your own web service can be written in any suitable language, as long as it can communicate with the Amazon API. A Software Development Kit (SDK) is available for each of the three programming languages. [1] A C++ SDK is available for devices that rely on the Alexa Voice Service. [2] The Speech Synthesis Markup Language (SSML) is also helpful when shaping the speech output. [3] With SSML you can customize how Alexa speaks its answers; otherwise Alexa renders the answer in its default manner.
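As a small illustration of SSML, the following sketch builds a response whose speech output contains a pause between two sentences. The function name `ssml_response` and its parameters are hypothetical; the `<speak>` and `<break>` tags are part of SSML, but the full set of tags Alexa supports is listed in the reference [3].

```python
from xml.sax.saxutils import escape


def ssml_response(text, closing="Goodbye", pause_ms=500):
    """Return a skill response that speaks `text`, pauses, then speaks `closing`."""
    # escape() protects characters such as & and < that would break the markup.
    ssml = (
        f"<speak>{escape(text)}"
        f'<break time="{pause_ms}ms"/>'
        f"{escape(closing)}</speak>"
    )
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "SSML", "ssml": ssml},
            "shouldEndSession": True,
        },
    }


if __name__ == "__main__":
    print(ssml_response("Tom & Jerry starts at eight")["response"]["outputSpeech"]["ssml"])
```

Escaping the text matters because unescaped special characters make the SSML invalid.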

For custom skills, note that the response returned by the skill has to follow a specific format and respect size limits. For example, the output speech in a response is limited to 8000 characters and the JSON response as a whole to 24 kB. When developing a custom skill, it is very important to know the principles of voice interface design. Ideally, this avoids so-called “unhappy paths”, i.e. conversation paths that do not lead to a meaningful end.
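A skill can guard against the limits mentioned above before sending a response. The following is a sketch under stated assumptions: the 8000 characters are applied to the speech output, 24 kB is interpreted as 24 × 1024 bytes, and the size is measured on the JSON text as Python serializes it. The function name `check_response_limits` is hypothetical.

```python
import json

MAX_SPEECH_CHARS = 8000  # character limit quoted in the text
MAX_RESPONSE_BYTES = 24 * 1024  # 24 kB, read here as 24 * 1024 bytes (assumption)


def check_response_limits(response):
    """Return a list of problems found; an empty list means the response fits."""
    problems = []
    speech = response.get("response", {}).get("outputSpeech", {})
    spoken = speech.get("ssml") or speech.get("text") or ""
    if len(spoken) > MAX_SPEECH_CHARS:
        problems.append(f"speech output has {len(spoken)} characters")
    # Approximate the payload size by serializing the response ourselves.
    size = len(json.dumps(response).encode("utf-8"))
    if size > MAX_RESPONSE_BYTES:
        problems.append(f"response is {size} bytes")
    return problems


if __name__ == "__main__":
    response = {
        "version": "1.0",
        "response": {"outputSpeech": {"type": "PlainText", "text": "Hello"}},
    }
    print(check_response_limits(response))
```

Running such a check in unit tests helps to catch overly long answers before users hear a generic error.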

[1] https://alexa-skills-kit-sdk-for-java.readthedocs.io/en/latest/index.html / https://alexa-skills-kit-python-sdk.readthedocs.io/en/latest/index.html / https://ask-sdk-for-nodejs.readthedocs.io/en/latest/index.html

[2] https://github.com/alexa/avs-device-sdk

[3] https://developer.amazon.com/en/docs/custom-skills/speech-synthesis-markup-language-ssml-reference.html

The Manual for Alexa Skill Development

If this article interested you, check out the manual for Alexa Skill Development on Amazon, or read the book’s page. On GitHub you can find all code examples used in the book.