Alexa Skills: How to test a skill

This article describes nine ways to test and monitor Alexa skills.

The following article is an excerpt from my book “Developing Alexa Skills”, which is available on Amazon from 8,99€.

Alexa Skills: How to test a skill

When developing skills, it is essential to create a high-quality experience for users. Users won’t come back if the skill doesn’t open or gets stuck somewhere in the dialog. Amazon tests custom skills, but the many 1-star ratings in the Skills Store show that faulty skills still get through. Here is just one example, for the skill with the ASIN B07MZ94ZSS (“Deal or no deal”):

The skill has since collected good ratings again, but an old rating like this can still appear among the first results in the ratings. Intensive, (partially) automated testing is the way to avoid this and to develop a skill reliably. There are several ways to test and debug Alexa skills:

- Classic debugging in code is worthwhile for general, non-Alexa-specific topics. The commands differ depending on the language (Python, JavaScript or C#).

- With the utterance profiler you can test the interaction model.

- The simulator on the test page in the developer console allows extensive testing of most Alexa Skills Kit features without a device. You can interact with Alexa by voice or text, or enter a test JSON file.

- End-to-end testing: voice test with an Alexa-enabled device (or via https://echosim.io).

- With the ASK CLI you can test the skill from the command line, using commands like invoke-skill and simulate-skill.

- Before a release, automated unit tests with the Mocha framework are useful.

- Automated tests can be extended with the TestFlow framework to send specific JSON requests.

- Further steps to professionalize testing are continuous integration (CI) and continuous delivery (CD).

- Live skills are best controlled with AWS Lambda Monitoring.

Depending on what you want to test, a combination of these tools makes sense. Manual tests remain important for checking the essential aspects of the skill, especially the interaction model, as an initial voice design almost always needs corrections.

Automated unit testing

This is the next essential aspect of testing and automation. The goal of unit testing is to see if the skill code works correctly. Such tests are especially useful when there are frequent new releases. Component tests are written to check every intent and every important part of the functionality for a given result.

There are many common frameworks for unit testing. For Node.js/JavaScript projects, Mocha and Chai are two of the most popular. Mocha is a unit test framework (it actually performs the unit tests), while Chai is an assertion framework (it provides functions for comparing actual and expected results).
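The division of labor between test runner and assertion library can be sketched as follows. This is a minimal sketch, not the example from the book: `buildResponse` is a hypothetical function standing in for real skill code, and Node's built-in `assert` module is used in place of Chai so that the file has no extra dependencies.

```javascript
// Hypothetical function under test (not part of any SDK): builds a
// minimal Alexa-style response for a given intent name.
function buildResponse(intentName) {
  if (intentName === 'HelloIntent') {
    return {
      outputSpeech: { type: 'PlainText', text: 'Hello!' },
      shouldEndSession: true,
    };
  }
  return {
    outputSpeech: { type: 'PlainText', text: 'Sorry, I did not understand that.' },
    shouldEndSession: false,
  };
}

// Mocha provides describe() and it() as globals when the file is run
// through the mocha command. The guard lets the file load under plain node too.
if (typeof describe === 'function') {
  // Node's built-in assert is a stand-in for Chai here.
  const { strictEqual } = require('assert');

  describe('buildResponse', () => {
    it('answers HelloIntent and ends the session', () => {
      const res = buildResponse('HelloIntent');
      strictEqual(res.outputSpeech.text, 'Hello!');
      strictEqual(res.shouldEndSession, true);
    });

    it('keeps the session open for unknown intents', () => {
      const res = buildResponse('SomethingElse');
      strictEqual(res.shouldEndSession, false);
    });
  });
}
```

With Chai installed, the same checks could be written in its `expect` style, for example `expect(res.shouldEndSession).to.equal(true)`.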

General recommendations for testing:

- Test the Invocation name: It should be easy to say and consistently recognized by Alexa.

- Allow for a delay after adding new utterances: Alexa sometimes needs some time to reload the model.

- Test variations: It is best to test many utterances with different slot values and different phrases, even if they sound similar. The example phrases given for the skill, in particular, should work.

- Test user errors: For intents with slots, you should also pay attention to missing or wrong slot values.

- For example, the code should react correctly if the user says something for a slot with a built-in slot type such as AMAZON.DATE, AMAZON.NUMBER or AMAZON.DURATION that cannot be converted to the specified data type.

- Use the Alexa app for testing: the cards and history can be displayed very well here. In addition to the cards created by the skill, Alexa sends back cards in response to errors in communicating with the skill.
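The recommendation about missing or wrong slot values might translate into a defensive helper like the one below. It is only a sketch: `readNumberSlot` and the `count` slot are hypothetical names, and the check for `"?"` rests on the assumption that Alexa passes this marker when it heard something for an AMAZON.NUMBER slot but could not convert it.

```javascript
// Returns the slot value as an integer, or null if the slot is missing,
// empty, or cannot be interpreted as a whole number.
function readNumberSlot(intent, slotName) {
  const slot = intent && intent.slots ? intent.slots[slotName] : undefined;
  if (!slot || slot.value === undefined || slot.value === null) {
    return null; // slot not present or not filled
  }
  const raw = String(slot.value).trim();
  if (raw === '?') {
    return null; // assumed marker for "heard, but not convertible"
  }
  if (!/^-?\d+$/.test(raw)) {
    return null; // anything that is not a plain integer
  }
  return Number.parseInt(raw, 10);
}

// Usage with a hand-written intent object (hypothetical intent and slot names):
const exampleIntent = {
  name: 'OrderIntent',
  slots: { count: { name: 'count', value: '3' } },
};
console.log(readNumberSlot(exampleIntent, 'count'));
```

When the helper returns null, the handler can ask the user again instead of continuing with an invalid value.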

Classic Debugging

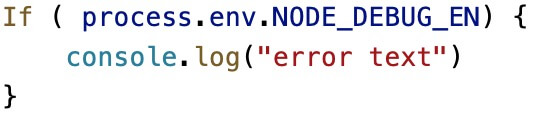

In JavaScript code, one possibility is to use console.log(“Text”) to write a message at certain positions. This message can be enriched, for example with the name of the intent that triggered it.

Alternatively, you can log the whole request with console.log(JSON.stringify(event, null, 2)). This can also be used for responses. Console logging, however, is visible to anyone executing the build. It is therefore better to wrap the logging in an if statement that checks an environment variable:

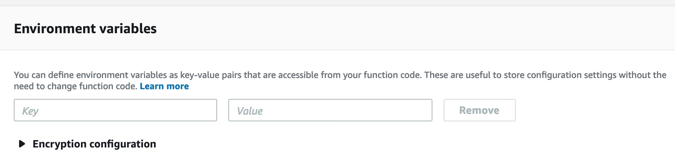

You can set this environment variable whenever you execute the script. If you run the code in AWS Lambda, you can also set environment variables there.
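A check of this kind could look like the following sketch. The variable name `DEBUG_LOG` and the helper `debugLog` are assumptions chosen for illustration, not names from the book.

```javascript
// Logs the payload only when the (hypothetical) DEBUG_LOG environment
// variable is set to "true". Returns whether anything was logged.
function debugLog(label, payload) {
  if (process.env.DEBUG_LOG !== 'true') {
    return false;
  }
  console.log(label, JSON.stringify(payload, null, 2));
  return true;
}

// Example call with a shortened, hand-written request object.
debugLog('REQUEST', { request: { type: 'LaunchRequest' } });
```

Locally, the variable can be set for a single run on Linux or macOS, for example with `DEBUG_LOG=true node index.js`.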

Testing with the utterance profiler

For testing content, the utterance profiler is the right tool. It is located at the top right of the “Build” tab.

The utterance profiler is very practical when creating an interaction model. For each sentence you enter, it shows how Alexa would interpret it, which intent Alexa would map the sentence to and which slots Alexa would fill. Since Alexa does not match sentences 1:1 to utterances, similar-sounding sentences can be mapped to an utterance that has been created, or Alexa can select a different intent. The profiler displays the result under “Selected intent”; other candidates are displayed under “Other considered intents”. If the selected intent is not the one you expect, or the slots are not filled as expected, you need to update your interaction model.

However, the profiler does not return a response; it only shows what input would be passed to the skill. It therefore doesn’t need an endpoint, but it also can’t test dialogs that are created at runtime. The profiler is available as soon as you have defined an interaction model.

For skills with a delegated dialog model, the dialog act is also shown in the right column under “Next dialog act”, i.e. the step in the dialog model that is to be executed next. This can be one of the dialog acts “ElicitSlot”, “ConfirmSlot” or “ConfirmIntent”.

For a skill for booking a trip with the Intent PlanMyTripIntent and the required slots: fromCity, toCity and travelDate, this could look like this:

- User: “Alexa, tell Plan my Trip that I’m going on a trip on Friday.”

- Alexa prompt: “Which city are you leaving from?” (Dialog act: ElicitSlot)

- User: “Seattle”

- Alexa prompt: “Which city are you going to?” (Dialog act: ElicitSlot)

- User: “Chicago”

- Alexa prompt: “OK, I’m planning your trip from Seattle to Chicago on January 18, 2019. Is that correct?” (Dialog act: ConfirmIntent)

- User: “Yes”.

The dialog is now complete, so the test is finished. The selected intent now shows PlanMyTripIntent with the three filled slots.
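The sequence of dialog acts in this walk-through can be reproduced with a small model. This is purely illustrative and not how Alexa implements dialog management: `nextDialogAct` is a hypothetical function, it assumes the required slots are elicited in a fixed order, and the final `Done` label is made up for the sketch.

```javascript
// Illustrative model only: picks the next dialog act for an intent whose
// required slots are elicited in order and which is confirmed at the end.
function nextDialogAct(requiredSlots, filledSlots, intentConfirmed) {
  const missing = requiredSlots.find((name) => filledSlots[name] === undefined);
  if (missing) {
    return { act: 'ElicitSlot', slot: missing };
  }
  if (!intentConfirmed) {
    return { act: 'ConfirmIntent' };
  }
  return { act: 'Done' }; // hypothetical label for a completed dialog
}

const requiredSlots = ['fromCity', 'toCity', 'travelDate'];

// State after each user turn of the PlanMyTripIntent example.
const dialogStates = [
  { filled: { travelDate: '2019-01-18' }, confirmed: false },
  { filled: { travelDate: '2019-01-18', fromCity: 'Seattle' }, confirmed: false },
  { filled: { travelDate: '2019-01-18', fromCity: 'Seattle', toCity: 'Chicago' }, confirmed: false },
  { filled: { travelDate: '2019-01-18', fromCity: 'Seattle', toCity: 'Chicago' }, confirmed: true },
];

for (const state of dialogStates) {
  console.log(nextDialogAct(requiredSlots, state.filled, state.confirmed));
}
```

The loop prints ElicitSlot for fromCity, ElicitSlot for toCity, ConfirmIntent and finally Done, matching the order of the prompts above.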

Testing with the Alexa Simulator

When the interaction model planned for the skill is ready, you can test it further in the developer console. Amazon has set up a simulator that is available in the “Test” tab. With it you can test the skill without a physical device. You can test both the current live version and the current development version.

A skill can only be enabled for testing in one stage at a time. If you enable testing for the live stage, testing is disabled for the development stage, and the live skill remains available in the developer console, SMAPI, the ASK CLI and on all devices registered to the developer account. When you switch stages in the developer console, the page is redirected to the URL of the live stage and all session and context information is reset.

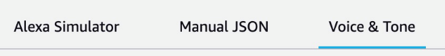

As input for the test page you can enter your own text dialog (under Alexa Simulator), a test JSON (under Manual JSON) or SSML (under Voice & Tone).

- Alexa Simulator: The simulator runs the skill session just like a device, so you can test the dialog flow. It also sends all cards that the skill returns to the Alexa app. If the skill supports multiple languages, select the test language from the drop-down list.

- Manual JSON: This is like testing a JSON request in the Lambda console. The Echo Show Display, Echo Spot and Device Log options are not supported for Manual JSON.

- Voice and Tone: Here you can enter plain text or SSML and hear Alexa speak the text. Again, you can select the language you want to hear from the list below.
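A test JSON for the Manual JSON input could be generated with a small helper like the one below. This is a reduced sketch: `buildIntentRequest` is a hypothetical helper, all IDs are placeholders, and real requests from Alexa carry additional fields (such as `context`), so the output may need to be extended before the console or a Lambda test accepts it.

```javascript
// Builds a minimal IntentRequest envelope. Every ID below is a placeholder.
function buildIntentRequest(intentName, slots = {}, locale = 'en-US') {
  const slotObjects = {};
  for (const [name, value] of Object.entries(slots)) {
    slotObjects[name] = { name, value };
  }
  return {
    version: '1.0',
    session: {
      new: true,
      sessionId: 'amzn1.echo-api.session.PLACEHOLDER',
      application: { applicationId: 'amzn1.ask.skill.PLACEHOLDER' },
      user: { userId: 'amzn1.ask.account.PLACEHOLDER' },
    },
    request: {
      type: 'IntentRequest',
      requestId: 'amzn1.echo-api.request.PLACEHOLDER',
      timestamp: new Date().toISOString(),
      locale,
      intent: {
        name: intentName,
        confirmationStatus: 'NONE',
        slots: slotObjects,
      },
    },
  };
}

// Print a request for the trip-planning example so it can be pasted as test JSON.
console.log(JSON.stringify(
  buildIntentRequest('PlanMyTripIntent', { toCity: 'Chicago' }),
  null,
  2
));
```

Generating the JSON in code rather than editing it by hand makes it easier to produce many variations with different slot values.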

Further options are:

- Skill I/O: this allows you to see the request and response in original JSON form for custom skills.

- Device display: Here you can see an approximate display of the skill screen. You can select: Small Hub (Echo Spot = 480*480), Medium Hub (1024*600), Large Hub (1280*800), and Extra Large TV (1920*1080).

- Device log: If this option is enabled, the events and directives exchanged with the device during the session are logged.

What can the simulator not test?

Unfortunately, the simulator cannot test everything. As of early 2019, it only supports custom skills, the display is not pixel-accurate, and nothing can be clicked in the simulator for visualizations. The complete list can be found here: https://developer.amazon.com/en/docs/devconsole/test-your-skill.html#alexa-simulator-limitations

End-to-end testing: Speech test on a real device

To run voice tests on a real device, you can either use https://echosim.io or register a real device to your developer account and open the skill there. At echosim.io it is enough to log in with the Amazon developer account; you can then invoke the skill as usual (with the skill name) by clicking on the simulator.

To test on an Alexa device with the developer account, the device must be registered to the same email address. A device that is already registered elsewhere has to be deregistered at https://alexa.amazon.com first.

Unit Testing: Tests for JavaScript/Node.js: Mocha and Chai

This chapter is available in the book.

Tests with the TestFlow framework

The TestFlow framework can be found at: https://github.com/alexa/alexa-cookbook/tree/master/tools/TestFlow. Written by Amazon developers, it simulates multi-turn conversations, enables interactive input, and delivers accurate output. You can use the tool to test the skill code without having to deploy it, because TestFlow is a small dialog simulator. TestFlow is unique in that it does not require a device, browser or network; it reduces input and output to a minimum.
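The idea of a local multi-turn dialog simulator can be illustrated with a few lines of plain Node.js. The sketch below is not TestFlow and does not use its API: `skillHandler`, `runDialog` and the simplified request shape (with the intent given as a plain string) are all hypothetical and only show how session attributes are carried from one turn to the next without a device or network.

```javascript
// Hypothetical skill logic: counts how often the user has said hello
// during the current session.
function skillHandler(request, sessionAttributes) {
  const attrs = { ...sessionAttributes };
  if (request.type === 'LaunchRequest') {
    attrs.count = 0;
    return { speech: 'Welcome. Say hello.', attrs, end: false };
  }
  if (request.type === 'IntentRequest' && request.intent === 'HelloIntent') {
    attrs.count = (attrs.count || 0) + 1;
    return { speech: `Hello number ${attrs.count}.`, attrs, end: false };
  }
  return { speech: 'Goodbye.', attrs, end: true };
}

// Feeds the requests to the handler one after another, carries the session
// attributes forward, and returns the spoken responses in order.
function runDialog(requests) {
  let attrs = {};
  const spoken = [];
  for (const request of requests) {
    const result = skillHandler(request, attrs);
    spoken.push(result.speech);
    attrs = result.attrs;
    if (result.end) {
      break; // the skill closed the session
    }
  }
  return spoken;
}

// A scripted conversation: launch, two hellos, then stop.
const spokenLines = runDialog([
  { type: 'LaunchRequest' },
  { type: 'IntentRequest', intent: 'HelloIntent' },
  { type: 'IntentRequest', intent: 'HelloIntent' },
  { type: 'IntentRequest', intent: 'AMAZON.StopIntent' },
]);
spokenLines.forEach((line) => console.log(line));
```

Because the whole conversation is just an array of requests, different dialog paths can be scripted and replayed quickly after every code change.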

The rest of this chapter is available in the book.

The Manual for Alexa Skill Development

If the article interested you, check out the manual for Alexa Skill Development on Amazon, or read the book’s page. On GitHub you can find all code examples used in the book.
